📋 About

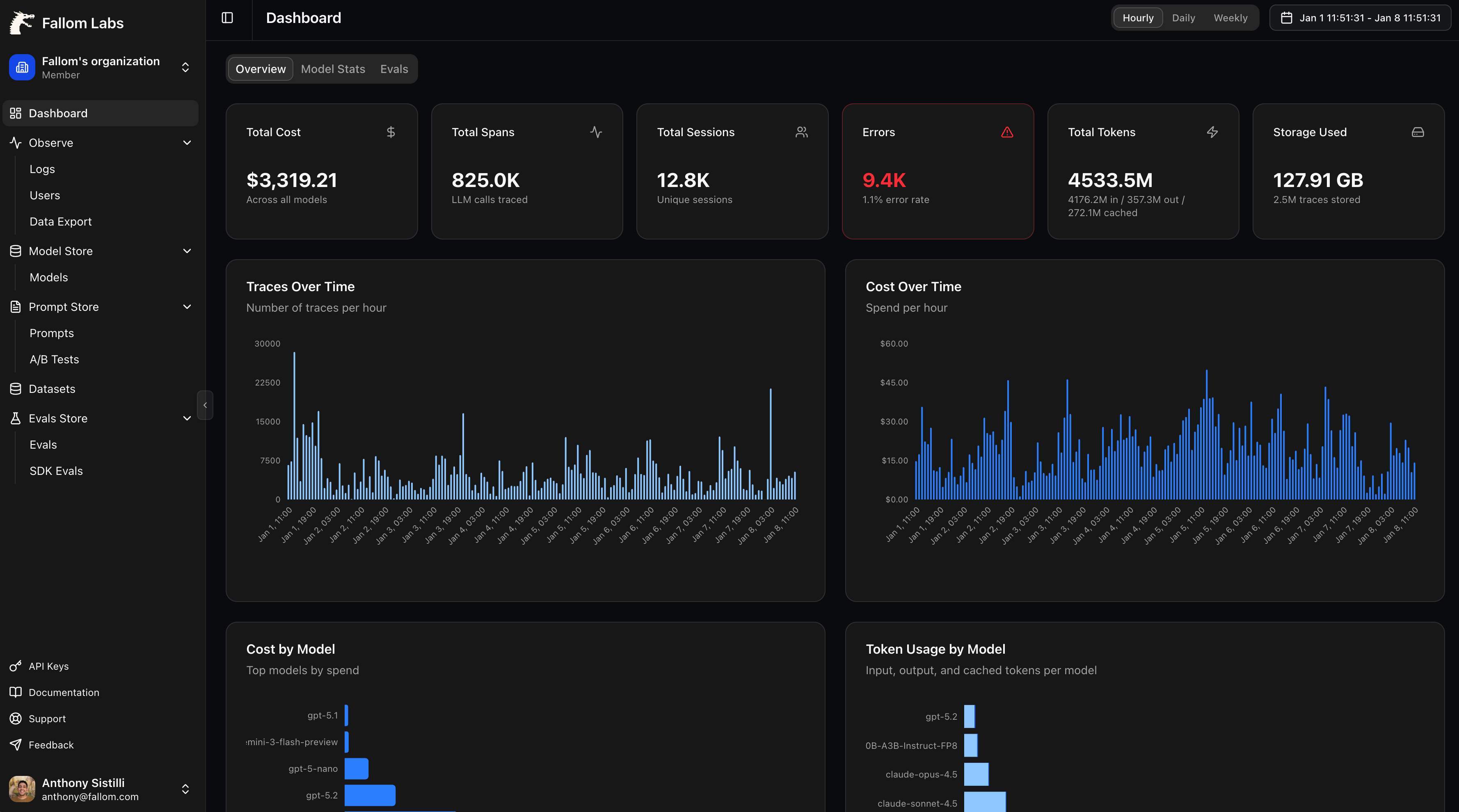

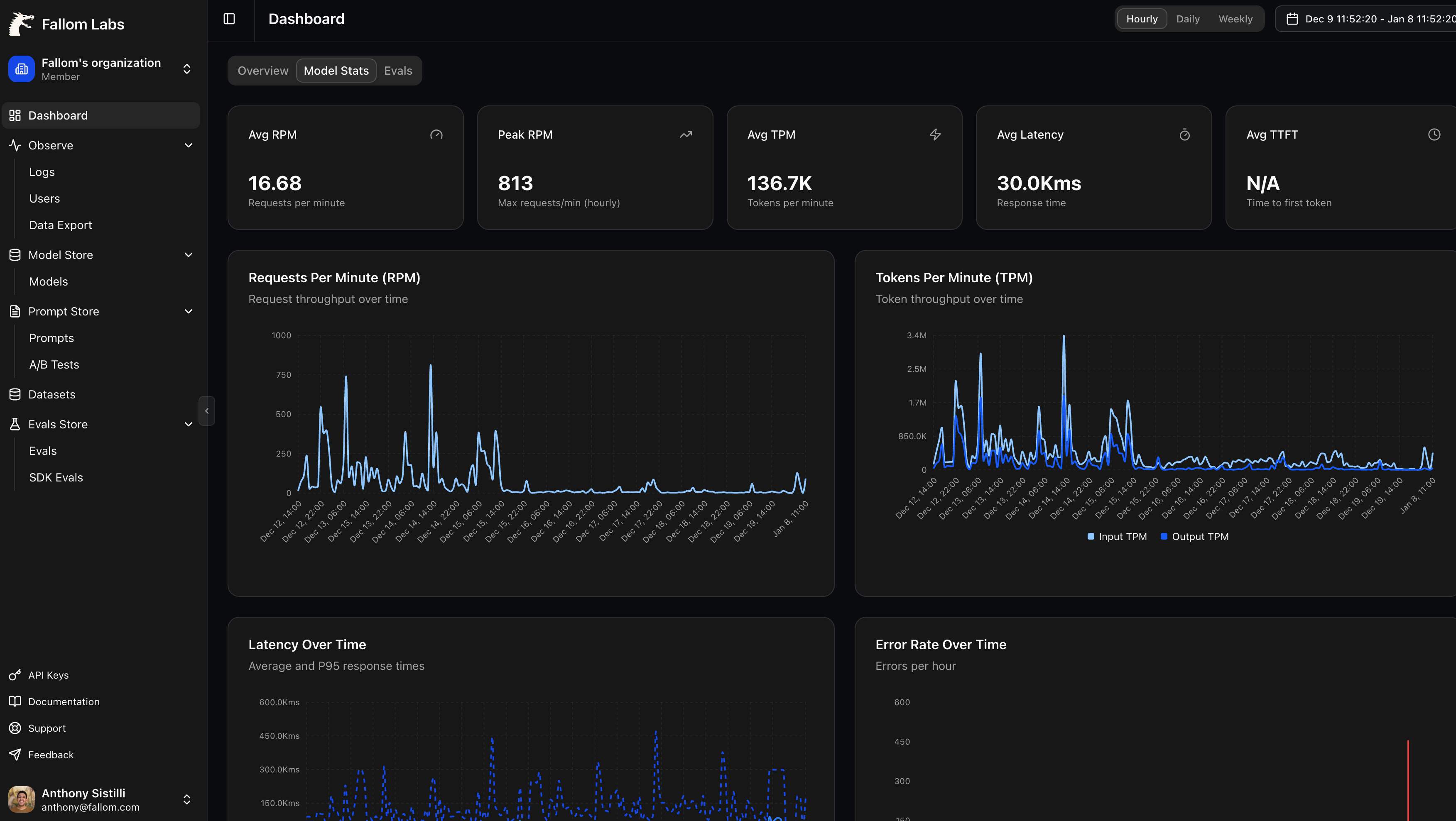

Fallom is an AI-native observability platform for LLM and agent workloads. It lets you see every LLM call in production through end-to-end tracing that captures prompts, outputs, tool calls, token counts, latency, and per-call cost.

We provide session/user/customer-level context, timing waterfalls for multi-step agents, and enterprise-ready audit trails with logging, model versioning, and consent tracking to support compliance needs.

With a single OpenTelemetry-native SDK, teams can instrument apps in minutes and monitor usage…
